CHRISTOPHER SEAN BATT

Why did the Apple fall far from the tree?

There is a good reason Apple focuses so much of its engineering on the M-series supercomputer-class chips, which began innocuously as mere mobile device engines, but with the Neural Processing Unit (NPU) on the same die as the Central Processing Unit (CPU) and the Random Access Memory (RAM) in the same package, as one integrated and interconnected design.

Is it because they didn’t want to depend on Intel, AMD, or NVIDIA? Or because Steve Jobs’ ego declared, “Our own super-great chips!”?

I call it a Trojan horse, positioned to unleash its ‘gift’ when on-device machine learning and generation becomes feasible, then mainstream, and then the datacenters (what’s left of them) collapse.

The only problem is “not quite yet,” because they have to wait for the AI hyper-bubble to burst first.

Quietly. Waiting. In. The. Wings.

Ohh, and there's that pesky battery problem on mobile, prompting the most-uttered sentence of the last few years: “I need to charge my iPhone.”

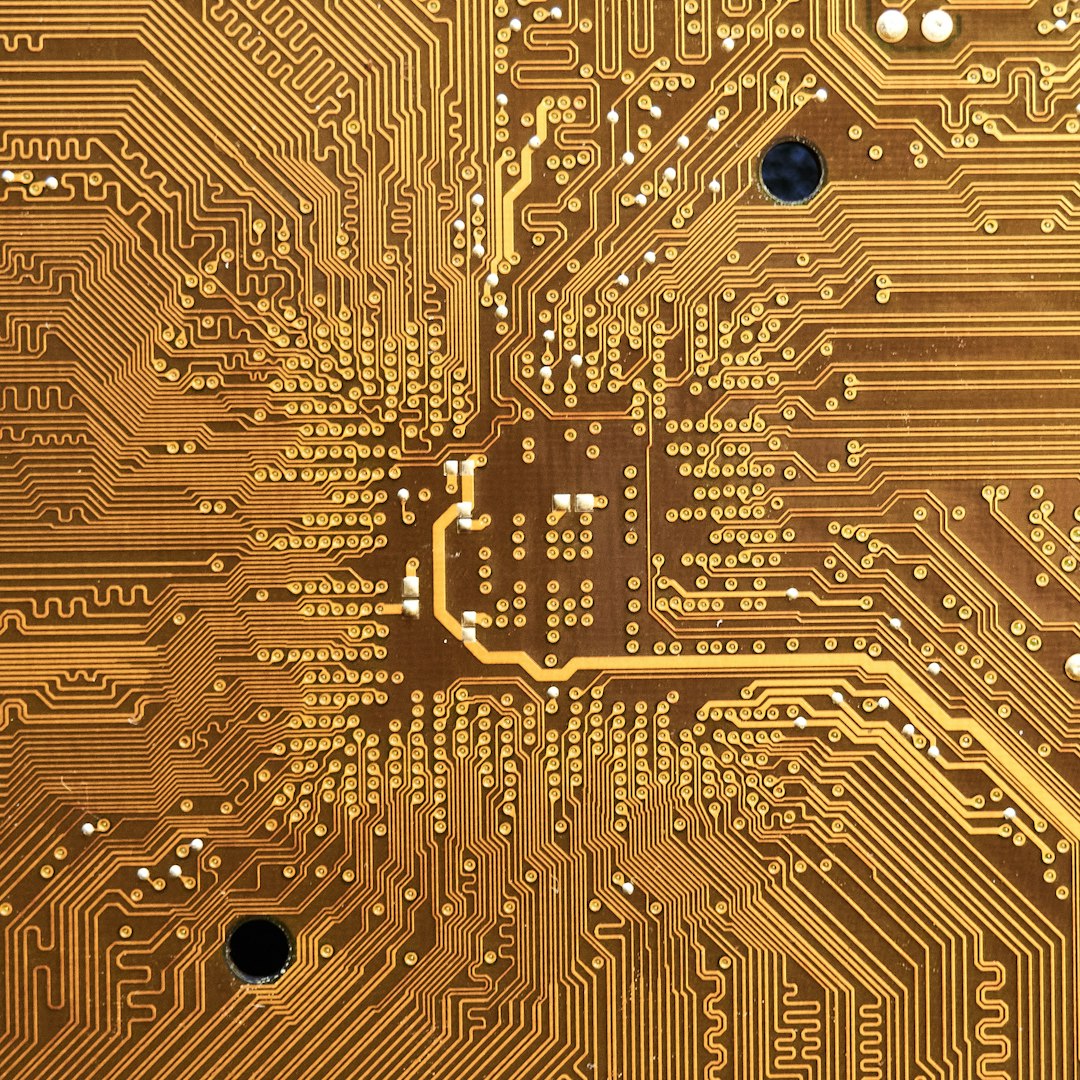

Apple M-series microprocessors.

Apple's M-series NPU+GPU+CPU powerhouses are works of art and the power-efficiency marvels of our time. Zoom in on a die shot of the deepest parts of the chip, and you will be amazed at what can be done with sand and electrons.

So much so that their chipmaking rivals had to flip the metrics and brag about how many watts their arcane GPU architectures swallow, wearing power draw like a badge of honor. As if consumption were a measure of horsepower, or of libido for sapiophile geeks and AI datacenter gods.

But anyone informed knows that real sapiophiles are totally gay for Apple's chip architectures, which are on another level of performance per watt and per mighty dollar.

So why does Apple suck at releasing AI tools that go head-to-head with cloud-based offerings from the usual suspects?

Because they are playing the long game, and $1B to borrow a competitor's money-losing infrastructure and chatbot is chump change. Yes, Apple is actually renting AI from Google Gemini. It is a temporary solution rather than buying into a sinking neighborhood, and it buys them time to stay focused on their endgame: on-device Apple Intelligence.

They quietly put neural network code (machine learning models actually executed by the NPU) into their phones and consumer-grade laptops, for everyone. That is unlike many so-called AI models, which:

- barely use the GPU efficiently,

- fight over programming interfaces like CUDA, and

- are held back by cross-architecture compatibility of software tools and compilers.

By design, Apple has none of these problems: it is a one-man show, but no one-trick pony.

AI datacenters are already failing.

AI datacenters are already a failed business model, because their builders drew a fatal parallel: they believed AI compute needed to stay in the cloud, just as the original datacenter model of the internet did for serving the web. However, the rise of edge computing and the on-device resources we now enjoy change the whole game.

This is especially true since current AI models, once trained, are a static set of weights that don’t change, while real-time data and knowledge of the world (both digital and physical) are already served by existing datacenters, which the models can reference however they like.

There is no case for centralizing AI unless a vendor insists on absolute control instead of making the right engineering decision: distributing the processing load across the 10B devices that users pay for themselves.

A cursory look at the money, electricity, water, and microchips these companies demand reveals that they are choosing control over any rational engineering approach: they are neither building on a sound architecture nor reading the writing on the wall about the edge and on-device trends we can already see today.

They (and their financial backers) are too invested to abandon the AI bubble, which is locked into their centralized business model and will all fall apart soon enough.

Apple’s holding pattern.

Given time, economics will sort out the wide and narrow paths along which machine learning and generative AI technologies evolve toward their most efficient deployment; we can sometimes rely on market economics to point the way to sanity and best fit.

Apple is ahead of the curve, quietly saying almost nothing and not hyping so-called AI, which is a clever marketing term for machine learning and neural network processing of vast amounts of data.

One day soon, once the battery drain and resource hogging of NPUs on consumer devices are optimized away, everything will be processed in some way by neural networks in every corner of Apple’s software and hardware ecosystem, from earbuds to phones to desktops.

The look of the interfaces will be determined later, based on behavioral analysis of evolving preferences and modalities. People may not prefer chatbots, but rather branching choices offered to them based on the context of whatever they are doing. Or maybe we will go back to some video game interfaces from the 1990s; who knows?

But there won’t be AI datacenters, just the remnants of a shrinking cloud, in favor of edge and on-device computation, and even peer-to-peer data routing between autonomous objects under a quantum-secure, end-to-end encryption standard.

Sure, the companies will fight amongst themselves for user data and behavioral capture, maintaining their walled gardens as long as possible, but users and devices will ultimately win the war of decentralization.

And so we should.

– Chris ;-)